So You Wanna Package Some Native Libs Into A NuGet Package

Into the depths of csproj and NuGet package management

January 21, 2024

While working on Dinghy, I’ve got a general flow that works. Namely, that there is a core .csproj Dinghy.Core (Library Project) that is the engine code itself, and there is another project, Dinghy.Sandbox (Executable Project), that references Dinghy.Core via a Project Reference.

The Sandbox project is meant to mock out the end-user experience of Dinghy and give me a general testbed for Dinghy features. Great. Additionally, the project reference to Core allows me to make changes in Core and immediately have access to the updated APIs in Sandbox without needing to go through a build process explicitly. Also great.

So what’s the issue?

Well, I want people to use Dinghy not as a GitHub repo where they have to clone all of Dinghy’s source to get it running, but “normally”, through Microsoft’s C# package manager, NuGet. You can pretty easily “turn on” NuGet packing through the Rider or Visual Studio UI, or by just adding this to your project’s csproj file:

    <PropertyGroup>
      <GeneratePackageOnBuild>true</GeneratePackageOnBuild>
    </PropertyGroup>

So you add that and bam, good to go, right?

Not so fast buddy.

For trivial projects with only C# source in them, this probably works pretty okay. But Dinghy is a game engine, which means it has some other things in the project that it needs in order to run. Namely, native platform-specific DLLs. Those DLLs are accessed through PInvoke (“Platform Invoke”) code, so function signatures that look like this:

    [DllImport("sokol", CallingConvention = CallingConvention.Cdecl, EntryPoint = "sapp_quit")]
    public static extern void quit();

If you’re used to working with native libraries in C#, this so far is nothing new. Additionally, .NET is smart about resolving library files when provided with just a name like "sokol". Internally, the runtime will look for libraries that match some version of that name. I say “some version” because the nomenclature for native libraries varies across platforms. On Windows the convention is just something like myLib.dll. But on macOS it’s libmyLib.dylib and on Linux it’s libmyLib.so. Dotnet knows this and as such looks for variations of that library name to resolve where to “go” when the actual PInvoke method is called.

Traditionally, if you’re just developing something with DLLs, you can just dump all your DLLs in the root of your project, and the DLLImport statement will know where to find those libs (root). You can even nest them all in a folder like libs such that you can do this (notice the library name):

    [DllImport("libs/sokol", CallingConvention = CallingConvention.Cdecl, EntryPoint = "sapp_quit")]
    public static extern void quit();

This all works, so what’s the rub?

Well, for this narrow use case of local development, nothing. But remember how I said I wanted to distribute the engine via NuGet? Well, for NuGet to understand your intention behind certain DLLs included in your library project, the folder structure in that project must conform to what NuGet expects for certain types of DLLs. There are three main flavors here: “compile”, “runtime”, and “native”. You can read more in the NuGet documentation. Compile/Runtime are mainly meant for managed DLLs, so ones that come from other dotnet packages. Instead, for Dinghy, I care about the Native option, as my included libraries are being built for specific platforms via C/C++ code.

The TLDR instead of trying to piece through the bowels of esoteric NuGet knowledge is that, when someone builds their project that depends on your package, you (presumably) want them to reference the correct DLLs for the target they are deploying on, and that NuGet will properly move the dependent DLLs to the correct place in the final build output. To do this, you have to respect this folder structure in your library project. For native, it’s something like this:

    .csproj
    src/
    runtimes/
    |___osx-x64/
        |___native/
            |___libmyLib.dylib
    |___win-x64/
        |___native/
            |___myLib.dll

So let’s put the libraries under a similar structure in our library project. We run the Sandbox and… uh oh. DLLNotFound errors abound. Which makes sense right? Consider the above:

    [DllImport("libs/sokol", CallingConvention = CallingConvention.Cdecl, EntryPoint = "sapp_quit")]
    public static extern void quit();

The path is wrong. Let’s update the path for macOS based on our new directory structure:

    [DllImport("runtimes/osx-x64/native/sokol", CallingConvention = CallingConvention.Cdecl, EntryPoint = "sapp_quit")]
    public static extern void quit();

We run the Sandbox again and it works, awesome. But… now we assume the library is in osx-x64. If we run on Windows… oh look who it is: DLLNotFound. You may think that we can use a variable string and switch based on the Environment, but the string inside the DLLImport attribute needs to be a compile-time constant. So maybe then we can do some nasty ifdef code like:

    // const is implicitly static in C#, so "static const" would not compile
    const string myStr =
    #if MAC
        "runtimes/osx-x64/native/sokol.dylib";
    #else
        "runtimes/win-x64/native/sokol.dll";
    #endif

    [DllImport(myStr, CallingConvention = CallingConvention.Cdecl, EntryPoint = "sapp_quit")]
    public static extern void quit();

This works mostly but is also not great because it means you’re now forcing flags on end users for conditional compilation, or doing it all yourself and making a bit of a mess around the build process.
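To give a sense of what “doing it all yourself” might involve, here is a minimal sketch of a csproj fragment that defines the MAC symbol used above. It is an illustration rather than anything from Dinghy’s build, and it comes with a big caveat: the condition checks the OS of the machine running the build, not the platform the app will eventually run on.

```xml
<!-- Sketch only: defines MAC when the build itself runs on macOS. -->
<!-- This reflects the build host, not the deployment target. -->
<PropertyGroup Condition="$([MSBuild]::IsOSPlatform('OSX'))">
  <DefineConstants>$(DefineConstants);MAC</DefineConstants>
</PropertyGroup>
```

That host-versus-target mismatch is a good part of why this route gets messy once you cross-publish.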

Around about this time is when I discovered two blog posts that helped me figure out what I should do:

https://www.lostindetails.com/articles/Native-Bindings-in-CSharp

https://nietras.com/2022/01/03/bendingdotnet-move-native-libraries/

The latter is more of a parallel problem that actually references the former, but the former is the one that’s pretty close to what I actually need.

The solution, to get around ifdef-ing all your lib names, is to leverage the NativeLibrary.SetDllImportResolver() method to basically instruct dotnet where to… Resolve DLL Imports (well named function, that one). So instead of needing to explicitly state the path, you can effectively intercept the DLLImport name resolution and point it to the right place. Combine that with Environment switching and you get this (read the article for context):

    internal static class NativeTesseractApi
    {
        static class Constants
        {
            public const string PlaceHolderLibraryName = "NativeTesseractLib";
            public const string WindowsAssemblyName = "libtesseract-4";
            public const string LinuxAssemblyName = "libtesseract.so.4";
        }

        static NativeTesseractApi()
            => NativeLibrary.SetDllImportResolver(Assembly.GetExecutingAssembly(), DllImportResolver);

        static string GetLibraryName(string libraryName)
            => libraryName switch
            {
                Constants.PlaceHolderLibraryName => Environment.OSVersion.Platform switch
                {
                    PlatformID.Win32NT => Constants.WindowsAssemblyName,
                    _ => Constants.LinuxAssemblyName,
                },
                _ => libraryName,
            };

        static IntPtr DllImportResolver(string libraryName, Assembly assembly, DllImportSearchPath? searchPath)
        {
            var platformDependentName = GetLibraryName(libraryName);
            IntPtr handle;
            NativeLibrary.TryLoad(platformDependentName, assembly, searchPath, out handle);
            return handle;
        }

        [DllImport(Constants.PlaceHolderLibraryName)]
        public static extern IntPtr TessBaseAPICreate();

        ...
    }

Nice! We get effectively a const switch and no need for platform defines. We stick that bad boy in the Engine library project, and see that Sandbox works! Now we pack the library as a NuGet package, publish for osx-x64 and… uh oh. What’s this?

Our libraries are no longer in runtimes/{rid}/native… But NuGet is smart right, it knows to change the DLL paths right? Right?

Like a good friend, DLLNotFound appears once again, letting us know that we’re still messing up. Going back to the linked NuGet doc page on native libraries I now better understood this callout:

The .NET SDK flattens any directory structure under runtimes/{rid}/native/ when copying to the output directory.

So… what can we do? How can we reference a DLL where its path shifts as it moves from being a consumed library project into a published project? Well with the current operating “model” of the problem, it feels like a dead end. But surely there is an answer…?

Big, BIG shoutout to reflectronic on the C# Discord here for helping me work through all of this, and then come up with a big insight that seems so obvious in retrospect. Specifically: if all the Dinghy users are consuming Dinghy through NuGet and Sandbox is a bespoke sandbox not meant to be replicated directly for end users (it literally links to the Dinghy csproj file), then the Sandbox project itself can be “more special” in order to get it to work.

Knowing this we tried a few things, including a valiant effort by reflectronic where we basically hijacked the deps.json file of a different NuGet package to spoof our native libs as if it were its own, but that ultimately didn’t work. I slept on the problem for a day and woke up with an insight, and also something that may be obvious to C# greybeards but wasn’t something I properly understood until now.

Let’s talk about the two not-initially-obvious-to-me things first.

First, I thought .nuspec and .csproj files were related, and they basically aren’t. I’m heavily paraphrasing here but basically a .nuspec file is package information, and .csproj controls build output of the library itself. When a .csproj file talks about “Output”, IT IS NOT TALKING ABOUT THE NuGet PACKAGE. Maybe some enterprise C# people are like “duh, bro” but I was forged in the fires of Unity C# so all this stuff is pretty new to me (also if you’re wondering if Unity made their own NuGet the answer is laughably yes). You can do package things in the .csproj file (more at the very bottom of this post) and you can probably do build things in the .nuspec file, so there is some cross-pollination. Is it confusing? Yes. Is it useful? Maybe? Do you wish there was just one way to do this? Yes.

Second, it was my understanding up until I solved this that if a project had PInvoke code inside of it (like the DLLImport stuff above), the compiled assembly of that library (e.g. myLib.dll) was the “thing” that actually did the PInvoke and hence “used” the PInvoke code.

This is not the case. In the case where you have some project that consumes the DLL that has PInvoke code in it, the new assembly is the one actually doing the PInvoke code, not the library with the PInvoke code inside of it. This means in the case of the Engine/Sandbox divide, the Sandbox project is the one that is actually resolving the PInvoke code.

A brief pause here while we ponder what the implications of that are for our problem. You now know enough to get the same working answer I found. Ready?

We can just move the DLLImport resolver code to the Sandbox instead of the Engine project!

We only need to change one line of the resolver (as well as move the code over to the Sandbox project), in order to make sure we are grabbing hold of the correct libraries that we want to reroute:

    static NativeLibResolver()
    {
        // used to assume we were the lib with pinvoke code
        // NativeLibrary.SetDllImportResolver(Assembly.GetExecutingAssembly(), DllImportResolver);

        // update to indicate that we want to resolve the Engine assembly types differently
        NativeLibrary.SetDllImportResolver(typeof(Dinghy.Scene).Assembly, DllImportResolver);
    }

Also as a quick side note here: the blog post that provided the resolver has it in a static class where the static constructor of the class (above) sets up the resolver. I had issues with this “just working” because I think NativeLibResolver would never statically initialize itself until I explicitly requested something from it. To this end I added an empty kick method that I call at the top of Sandbox to make sure the resolver sets everything up properly:

    public static void kick(){}
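The lazy initialization behind that side note can be seen in isolation. The sketch below is a standalone illustration, not Dinghy code: InitLog, LazyResolver and Kick are hypothetical names, and the static constructor just records that it ran instead of registering a real resolver. A class with an explicit static constructor is only initialized the first time one of its members is touched, which is why an empty method is enough to trigger it.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical log, kept in its own class so that reading it
// does not touch LazyResolver.
static class InitLog
{
    public static readonly List<string> Events = new();
}

static class LazyResolver
{
    // Stand-in for the constructor that would call SetDllImportResolver.
    static LazyResolver() => InitLog.Events.Add("resolver registered");

    // Empty on purpose: calling it is enough to force the static constructor.
    public static void Kick() { }
}

static class Program
{
    static void Main()
    {
        Console.WriteLine(InitLog.Events.Count); // 0: nothing has touched LazyResolver yet
        LazyResolver.Kick();
        Console.WriteLine(InitLog.Events.Count); // 1: the static constructor has now run
    }
}
```

If an empty method feels too hacky, RuntimeHelpers.RunClassConstructor(typeof(LazyResolver).TypeHandle) should force the same initialization without one.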

Because the Sandbox is the project that is in charge of actually resolving DLL import paths in the special context in which we are using the Sandbox, we can use the resolver so the Sandbox can route to the correct lib folder. The “correct” folder here now manifests from another change, based on the first bit of insight.

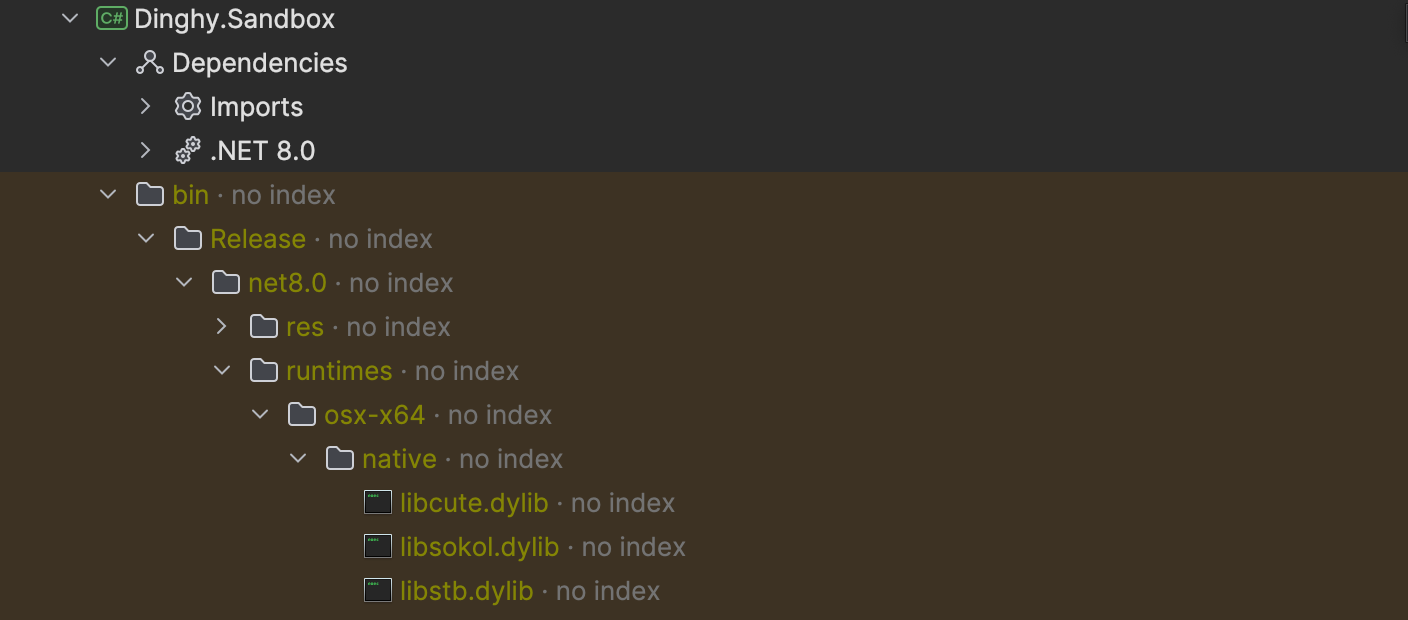

I had assumed <CopyToOutputDirectory> in the .csproj settings was just copying the libs to the NuGet package and bloating the package. This is not the case. The NuGet package doesn’t care about that flag. So I added this into my Engine .csproj file:

<ItemGroup>

<Content Include="runtimes\**\*">

<CopyToOutputDirectory>Always</CopyToOutputDirectory>

<PackagePath>runtimes</PackagePath>

</Content>

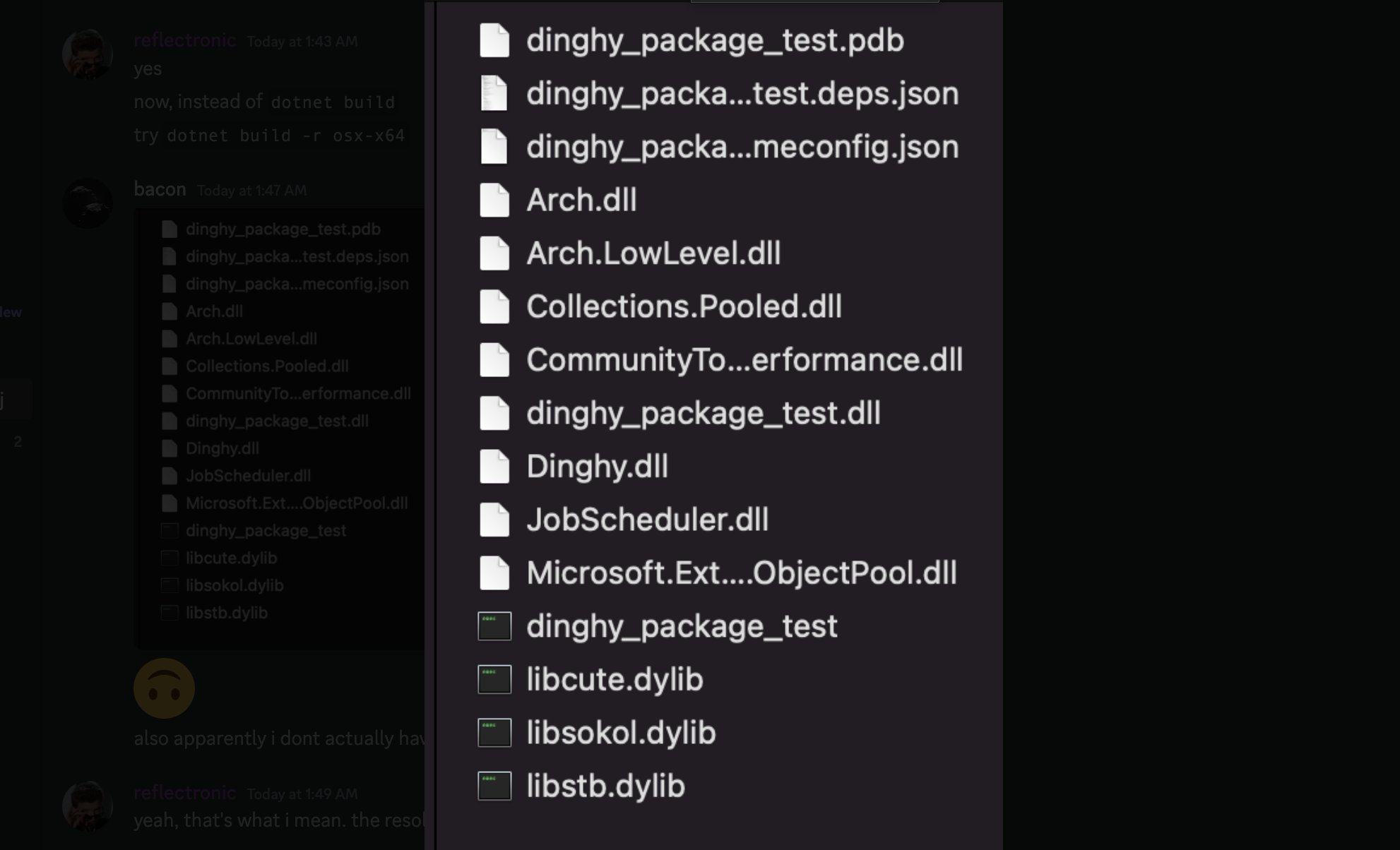

</ItemGroup>When the Sandbox builds, the libs are spit out into the bin of the sandbox like this:

This is perfect for our resolver! And just to double back and check, what do the actual DLLImport statements look like on the Engine PInvoke functions? Check it out:

    [DllImport("sokol", CallingConvention = CallingConvention.Cdecl, EntryPoint = "sapp_quit")]
    public static extern void quit();

We can still use the “simple” library names because they now work for the import resolver on the Sandbox project, and also work all the way at the end of the NuGet publishing process because the libs are spit out at the root of the build output.
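For reference, a Sandbox-side resolver along these lines might look like the following. This is a minimal sketch, not the actual Dinghy code: the class name, the Register method and both helpers are hypothetical. It assumes the libs sit under runtimes/{rid}/native next to the Sandbox executable, and that file names follow the usual per-platform conventions (sokol.dll, libsokol.dylib, libsokol.so).

```csharp
using System;
using System.IO;
using System.Reflection;
using System.Runtime.InteropServices;

internal static class SandboxResolverSketch
{
    // Call once at startup, before any PInvoke runs.
    // SetDllImportResolver can only be set once per assembly.
    public static void Register(Assembly engineAssembly)
        => NativeLibrary.SetDllImportResolver(engineAssembly, Resolve);

    // Builds a RID-like folder name such as "osx-x64" or "win-x64"
    // from the current process.
    internal static string CurrentRid()
    {
        string os = OperatingSystem.IsWindows() ? "win"
                  : OperatingSystem.IsMacOS() ? "osx"
                  : "linux";
        string arch = RuntimeInformation.ProcessArchitecture.ToString().ToLowerInvariant();
        return $"{os}-{arch}";
    }

    // Applies the assumed per-platform naming convention to a bare name like "sokol".
    internal static string FileNameFor(string name)
        => OperatingSystem.IsWindows() ? $"{name}.dll"
         : OperatingSystem.IsMacOS() ? $"lib{name}.dylib"
         : $"lib{name}.so";

    static IntPtr Resolve(string libraryName, Assembly assembly, DllImportSearchPath? searchPath)
    {
        string candidate = Path.Combine(
            AppContext.BaseDirectory, "runtimes", CurrentRid(), "native", FileNameFor(libraryName));

        if (File.Exists(candidate) && NativeLibrary.TryLoad(candidate, out IntPtr handle))
            return handle;

        // Returning IntPtr.Zero hands control back to the runtime's default probing.
        return IntPtr.Zero;
    }
}
```

With something like this in place, the top of Sandbox would call SandboxResolverSketch.Register(typeof(Dinghy.Scene).Assembly). Falling back to IntPtr.Zero means a published app, where the libs have been flattened to the output root, still resolves through the default path.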

Notably, if for some reason the Engine project called itself this would break (I think). However given that it’s a library project, some other project has to call this and hence that other project is either our Sandbox (which we handle with the Resolver), or an end-user project that would consume us via NuGet. One limitation would be that if I wanted to give someone the raw engine DLLs directly without NuGet, they would need to similarly include their own resolver. This previously linked post touches on a similar issue and how you would fix it.

WHEW — we did it. This post ended up being way longer than I expected but I hope it helps if you’re on a similar quest. Coming from Unity-centric gamedev there is very little visibility/knowledge on the finer points of NuGet/csproj stuff so I’m trying to do my part here and spread the knowledge. In part because the initial ask seems so easy/common:

I want to be able to work on a library project with native DLLs in a sandbox project, and also have the ability to pack the library project to distribute to other users.

I’m shocked to find out how much of a tall order that was, but am glad that ultimately I have a solution that I think works pretty well!

Anyways, thanks for reading, and till next time! (But also read below the break for alternate approaches.)

Knowing what I know now I think there are also maybe other ways to do this.

Figuring out the above was hard in part because I didn’t understand initially what the problem actually was or how to ask for it (Google, Discord, SO, ChatGPT, etc.) in a legible way to get an answer that I was looking for. I didn’t really understand NuGet package packing, project vs. NuGet references, etc.

However now I think two main other approaches seem viable:

One is something that is suggested in the linked NuGet doc section on packaging native assets:

When packing with NuGet’s MSBuild Pack target, you can include arbitrary files in arbitrary package paths using Pack="true" PackagePath="{path}" metadata on MSBuild items. For example, <None Include="../cross-compile/linux-x64/libcontoso.so" Pack="true" PackagePath="runtimes/linux-x64/native/" />.

So instead of pre-building the desired NuGet directory structure for native assets and routing the PInvoke calls in the Sandbox, you dump the libs wherever (probably project root) and then in the .csproj of the library project you use the metadata above to route the libs to the correct folder at packing time. Note that this doesn’t affect the lib locations at all for projects like Sandbox that would consume the library.
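Applied to a project like Dinghy, that might look something like the fragment below. This is a sketch under assumptions: the libs/ source folder and the file names are hypothetical, and only the PackagePath values follow the runtimes/{rid}/native convention described earlier.

```xml
<ItemGroup>
  <!-- Hypothetical source locations; PackagePath decides where each file lands in the .nupkg. -->
  <None Include="libs/libsokol.dylib" Pack="true" PackagePath="runtimes/osx-x64/native/" />
  <None Include="libs/sokol.dll" Pack="true" PackagePath="runtimes/win-x64/native/" />
</ItemGroup>
```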

Another option is to do a similar thing, but in the nuspec file. You declare each file’s location in the project and set its target to the correct RID folder:

    <file src="..\ARM\Debug\ImageEnhancer\ImageEnhancer.dll" target="runtimes\win10-arm\native"/>

Seems easy enough. Like the above answer, this doesn’t affect consuming packages.

Personally, I think I’ll probably stick to the “Sandbox does it weird” way (for now), in part because it keeps the library itself nicely organized around what its final output will be for end users.

Okay now that’s actually all — thanks for reading!

Okay but also seriously you should read some of the GitHub issues around native libraries and NuGet. It’s a quagmire and there is basically no “official” way to do any of it.
